In a bygone era (mid-1990s) technical SEO fell into the realm of developers, webmasters and velociraptors.

All you really needed to do was submit your site for indexing, add the keyword meta tag, tweak HTML and let the spider do the rest of the work.

As search algorithms got better, these technical moves became obsolete, and other, more relevant methods were adopted for better search results.

Fast forward to today where SEO requires strategy, technical expertise, content skills and PR chops.

Speaking of technical expertise, there are still a number of technical factors that can deep six a site and keep pages from ranking.

Usually, technical factors can be fixed relatively quickly and can turn a site’s visibility around. Also, in my mind, technical factors are things that involve programmatic knowledge and not things that are content related (potato, potahto).

Housekeeping Items

- Install Google Analytics and Google Webmaster Tools (now called Google Search Console) and get them linked together. Using both, you’ll be able to monitor the site before and after your technical changes. You’ll also be able to examine traffic and crawl errors, and easily submit your site and sitemaps to the index.

The Checklist

- To www or not to www – Go to your browser and type your domain with www. and without. What do you see? If your site resolves both with and without www, then you need to pick one version and 301 redirect the other to it. Why? Your site’s overall trust can be diluted by splitting your link profile and indexing in half. Search engines will see the two as separate sites when they’re really the same.

- Have a united front – If you’ve got multiple domains resolving to one webhost, then 301 redirect that traffic to the main domain. For example, you may own misspelled versions of your domain, or an old domain you used to use and just left lying around. Pulling the traffic together gives your site a united front for search engines.
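To make the two redirect items above concrete, here is a minimal sketch of the decision a server has to make for each request. It is only an illustration: the preferred host, the list of alternate hosts and the function name `redirect_for` are all made up, and in practice this logic usually lives in your web server or CMS configuration rather than in application code.

```python
from urllib.parse import urlsplit, urlunsplit

# Assumptions for illustration only: neither the preferred host nor the
# alternate hosts come from a real site.
CANONICAL_HOST = "www.mysite.com"
ALTERNATE_HOSTS = {"mysite.com", "my-site.com", "www.my-site.com", "mysiet.com"}


def redirect_for(url):
    """Return (301, target_url) if the URL should be redirected to the
    canonical host, or None if no redirect is needed."""
    parts = urlsplit(url)
    host = (parts.hostname or "").lower()
    if host == CANONICAL_HOST:
        return None  # already on the preferred host
    if host in ALTERNATE_HOSTS:
        # Keep the path and query string so deep links still land on the right page.
        target = urlunsplit((parts.scheme, CANONICAL_HOST, parts.path, parts.query, ""))
        return 301, target
    return None  # unknown host: leave it alone


print(redirect_for("http://mysite.com/donkey.html?ver=1"))
print(redirect_for("http://www.mysite.com/"))
```

The important detail is the 301 (permanent) status, which is what tells search engines to treat the preferred host as the one true address.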

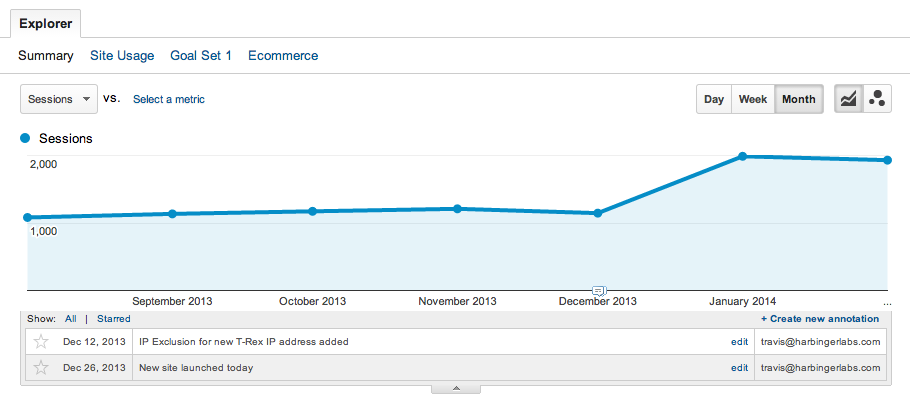

Organic site traffic after URLs were redirected into one.

- Header codes – Crawl your pages and check the HTTP status code each one returns. An ‘all good’ code is 200, while a ‘page not found’ is 404. I commonly see error pages that should return 404 but return 200 instead, which makes them tricky to track down and can lead to a bad UX.
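One way to hunt for error pages that wrongly return 200 is to compare the status code with what the page actually says. The sketch below uses only Python's standard library. The phrases in `ERROR_PHRASES` are guesses you would need to tune to your own error template, and both function names are invented for this example.

```python
import urllib.error
import urllib.request

# Hypothetical phrases that suggest an error page; adjust for your own templates.
ERROR_PHRASES = ("page not found", "no longer exists")


def fetch_status(url):
    """Return (status_code, body_text) for a URL, including 4xx/5xx responses."""
    try:
        with urllib.request.urlopen(url, timeout=10) as resp:
            return resp.status, resp.read().decode("utf-8", errors="replace")
    except urllib.error.HTTPError as err:
        # urllib raises on 4xx/5xx, but the error still carries the code and body.
        return err.code, err.read().decode("utf-8", errors="replace")


def looks_like_soft_404(status, body):
    """True when the page text reads like an error page but the server said 200."""
    text = body.lower()
    return status == 200 and any(phrase in text for phrase in ERROR_PHRASES)
```

You would call `fetch_status` on each URL from your crawl and pass the result to `looks_like_soft_404`. Anything it flags is worth a manual look, since a simple phrase match will produce some false positives.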

- Canonicalization – This long, confusing word is another way of saying ‘there are a few versions of the same page, let’s programmatically make that one version’. Say you have mysite.com/donkey.html?ver=1 and mysite.com/donkey.html?ver=2. To make this all one, you’ll need to add a canonical tag like so:

<link rel="canonical" href="http://mysite.com/donkey.html">

The canonical tag tells search engines that both dynamic versions are to be treated as one.
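If your pages are built from templates, the tag can be generated rather than typed by hand. Here is a minimal sketch that assumes the query string never changes what the page shows, as in the donkey example. If a parameter does change the content (pagination, for instance), you would not want to strip it. The function names are made up for illustration.

```python
from html import escape
from urllib.parse import urlsplit, urlunsplit


def canonical_url(url):
    """Drop the query string and fragment so every variant maps to one URL."""
    parts = urlsplit(url)
    return urlunsplit((parts.scheme, parts.netloc, parts.path, "", ""))


def canonical_tag(url):
    """Build the <link rel="canonical"> tag for a page URL."""
    href = escape(canonical_url(url), quote=True)
    return '<link rel="canonical" href="{}">'.format(href)


print(canonical_tag("http://mysite.com/donkey.html?ver=1"))
print(canonical_tag("http://mysite.com/donkey.html?ver=2"))
```

Both calls produce the same tag, which is the whole point: every variant of the page points at one address.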

- Robots.txt nano nano – This simple text file can really help or really hurt your site depending on its contents. It tells search engines what to crawl and what not to crawl, and it should be found in the top-level directory. If there are sections or pages on the site you’d like to remain hidden (hmmm), then a properly set up robots.txt file can make that happen.
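Because one wrong line in robots.txt can block far more than you intended, it is worth testing the rules before they go live. The sketch below uses Python's built-in `urllib.robotparser` to check a few URLs against a sample file. The file contents, the paths and the helper name `is_crawlable` are made up for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt contents; the paths are invented for this example.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /drafts/
"""


def is_crawlable(robots_txt, url, user_agent="*"):
    """Check whether the given robots.txt text lets a crawler fetch the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


print(is_crawlable(ROBOTS_TXT, "http://mysite.com/donkey.html"))
print(is_crawlable(ROBOTS_TXT, "http://mysite.com/admin/settings"))
```

Running your most important URLs through a check like this after every robots.txt change is a cheap way to catch an accidental `Disallow: /`.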

- Sitemap.xml, the nerd version – You’re probably aware of an HTML sitemap – the one in your footer with a list of pages on your site. But another, equally important one is your sitemap.xml. Sitemap.xml is another way to help the search engines understand what can be found on your site. Basically, it lists URLs, modification dates and change frequency in a format that is easy for crawlers to read. Ideally, the sitemap.xml file will be updated automatically when you publish a new blog post, update a page or unpublish a page. There are CMS plugins that make this easy; just do a Google search.
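If you are curious what a plugin writes out for you, here is a rough sketch that builds a small sitemap.xml with Python's standard library. The page list, the dates and the function name `build_sitemap` are invented for the example. The `urlset`, `url`, `loc`, `lastmod` and `changefreq` element names and the namespace come from the sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """pages: iterable of (url, lastmod, changefreq) tuples; returns XML text."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, lastmod, changefreq in pages:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
        ET.SubElement(entry, "lastmod").text = lastmod
        ET.SubElement(entry, "changefreq").text = changefreq
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical pages and dates, for illustration only.
print(build_sitemap([
    ("http://mysite.com/", "2024-01-15", "weekly"),
    ("http://mysite.com/donkey.html", "2024-01-10", "monthly"),
]))
```

In a real setup, the page list would come from your CMS or database so the file stays current without anyone touching it.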

- Sitespeed – This is important. Sometimes your server, markup or architecture can put the brakes on page load times. Page speed is a factor in ranking success, and slow pages can often be sped up dramatically once you know what is holding them back. Check out Google’s PageSpeed Insights or Pingdom’s Website Speed Test to see where you can improve.

Like I said before, proper technical setup is a big factor for SEO. Most of the above tweaks can be knocked out in a day by an experienced developer or SEO firm and can impact how your site is seen by search engines.

Just make sure you understand what you’re doing before pulling the trigger. And once you do pull the trigger, test, test, test and keep a close eye on Webmaster Tools and Analytics to spot any problems.
